Financial crime has become AI-native.

Criminal networks use generative AI to run many operations in parallel: synthetic identity creation, automated phishing campaigns, deepfake document generation, and coordinated account takeovers. They deploy AI to tune transaction patterns so that those patterns evade detection. They operate at a scale and speed that manual compliance processes cannot match.

The compliance industry is responding. Surveys suggest that 70% of financial institutions are exploring AI for anti-money laundering (AML). The recognition is widespread: you cannot fight AI-enabled crime at scale with spreadsheets and static rules.

But exploration is not deployment. The gap between them is explainability.

The agentic defense model

Legacy compliance systems evaluate transactions against predetermined rules. They generate alerts. Humans investigate. The system provides flags; humans provide reasoning.

Agentic AI systems operate differently. They gather evidence autonomously, reason through cases, and document their logic at each step. They manage workflows end-to-end, from initial detection through investigation to resolution. This is not automation in the traditional sense. Automation executes predefined procedures faster; agentic systems exercise judgment within defined parameters, adapting their approach based on what they find.

For compliance, agentic AI means systems that can:

- Investigate alerts by gathering relevant context from multiple sources.

- Assess risk based on behavioral patterns and historical comparisons.

- Generate documentation that explains the reasoning.

- Escalate to human review with evidence already assembled.
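A minimal Python sketch of that loop follows. Everything in it is hypothetical: the `CaseFile` structure, the stubbed data sources, the point weights, and the escalation threshold are illustrative assumptions, not a production risk model. What it shows is the shape of the workflow: each step gathers or weighs evidence and appends a human-readable reason to the case file, so an escalated case reaches the analyst with its evidence and reasoning already assembled.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical: a context source maps a customer ID to a dict of facts
# (for example, a transaction summary or a customer profile).
ContextSource = Callable[[str], dict]


@dataclass
class CaseFile:
    alert_id: str
    customer_id: str
    evidence: dict = field(default_factory=dict)
    reasoning: list = field(default_factory=list)  # human-readable trace
    risk_points: int = 0
    disposition: str = "open"


def investigate(case: CaseFile, sources: dict[str, ContextSource]) -> None:
    """Gather context from every source and record what was collected."""
    for name, fetch in sources.items():
        case.evidence[name] = fetch(case.customer_id)
        case.reasoning.append(f"Gathered {name}: {case.evidence[name]}")


def assess(case: CaseFile) -> None:
    """Score risk against the customer's own history (illustrative weights)."""
    activity = case.evidence["activity"]
    profile = case.evidence["profile"]
    ratio = activity["monthly_volume"] / profile["avg_monthly_volume"]
    if ratio > 2:
        case.risk_points += 50
        case.reasoning.append(f"Volume is {ratio:.1f}x the historical average (+50)")
    new_high_risk = activity["new_high_risk_counterparties"]
    if new_high_risk > 0:
        case.risk_points += 30
        case.reasoning.append(f"{new_high_risk} new high-risk counterparties (+30)")
    if profile["seasonal_business"]:
        case.risk_points -= 20
        case.reasoning.append("Business type has a seasonal pattern (-20)")


def resolve(case: CaseFile, escalate_at: int = 50) -> None:
    """Close low-risk cases with documentation; escalate the rest to a human."""
    if case.risk_points >= escalate_at:
        case.disposition = "escalated_to_analyst"
    else:
        case.disposition = "closed_with_documentation"
    case.reasoning.append(
        f"{case.risk_points} points vs threshold {escalate_at}: {case.disposition}"
    )


# Usage with stubbed sources standing in for real systems.
sources = {
    "activity": lambda cid: {"monthly_volume": 44_000, "new_high_risk_counterparties": 12},
    "profile": lambda cid: {"avg_monthly_volume": 10_000, "seasonal_business": False},
}
alert_case = CaseFile(alert_id="ALERT-001", customer_id="CUST-42")
investigate(alert_case, sources)
assess(alert_case)
resolve(alert_case)
for line in alert_case.reasoning:
    print(line)
```

The design choice worth noting is that the trace is written as a side effect of each step rather than reconstructed afterward, so the analyst reviews the same reasoning the system actually used.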

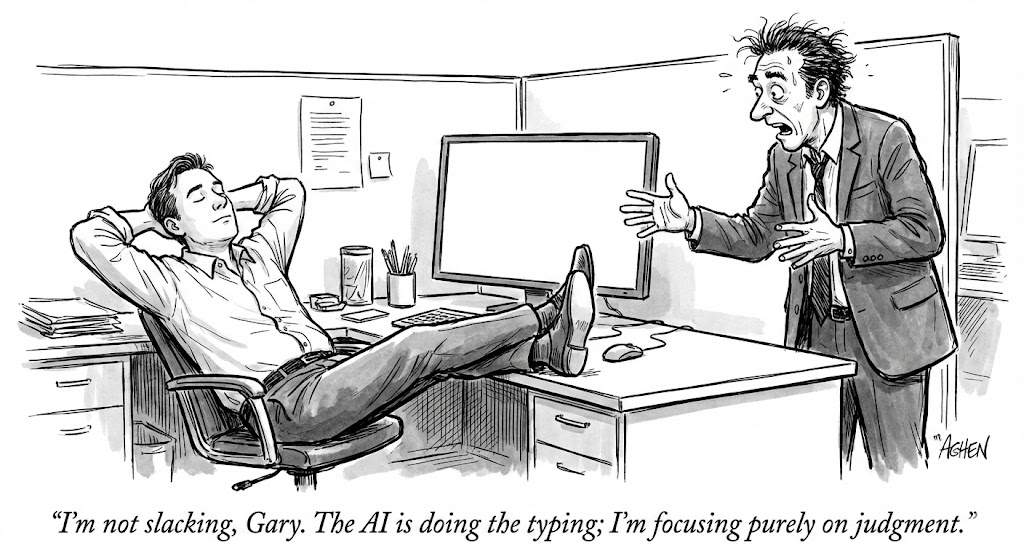

The analyst's role shifts from data gathering to judgment validation. The system assembles the evidence and drafts the conclusions; the human confirms or rejects them.

Why regulators are skeptical

Regulatory guidance on AI in compliance is consistent: explainability is non-negotiable.

The Office of the Comptroller of the Currency (OCC), the Federal Reserve, and other supervisors have made clear that "the model said so" is not an acceptable explanation. Model risk management guidance (the Federal Reserve's SR 11-7, adopted by the OCC as Bulletin 2011-12) applies to AI compliance tools. In practice, this means documenting model design, validating performance, monitoring the model on an ongoing basis, and maintaining governance processes.

When an AI system flags a transaction, the compliance team must explain why. When an AI system closes an alert, the reasoning must be documented. When regulators examine the program, they want audit trails that show how decisions were made.

Black-box AI creates regulatory risk even when it performs well. If you cannot explain the decision, you cannot defend it.

The explainability requirement shapes architecture

Building AI that regulators trust requires designing for explainability from the start:

- Step-by-step reasoning. Every decision should produce a trace showing what evidence was gathered, what factors were considered, and how conclusions were reached.

- Evidence linking. Conclusions should reference specific data points. "Customer flagged for unusual activity" is insufficient. "Customer's transaction volume increased 340% month-over-month, with 12 new counterparties in high-risk jurisdictions, while the customer's business type shows no seasonal pattern that would explain the increase" is defensible.

- Audit trail preservation. Retain the complete decision history: what the system knew, what it decided, and why. This must be reconstructable months or years later when examiners ask questions.

- Human oversight integration. Agentic systems need explicit escalation criteria. High-confidence, low-risk decisions can proceed with documentation. Novel patterns, edge cases, and high-stakes decisions require human review.
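Evidence linking and audit-trail preservation can be made concrete with a small Python sketch. It is an illustration under stated assumptions: `Evidence`, `AuditLog`, `month_over_month_change`, and the reference strings are hypothetical names, the volumes are made-up figures, and the hash chain (each entry stores the SHA-256 digest of its predecessor) is one common tamper-evidence technique, not something regulators prescribe. It also shows the arithmetic behind a claim like "increased 340% month-over-month": a rise from 10,000 to 44,000 is (44,000 - 10,000) / 10,000 = 340%.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class Evidence:
    """A specific data point a conclusion cites (reference format is hypothetical)."""
    ref: str
    field_name: str
    value: float


def month_over_month_change(prior: float, current: float) -> float:
    """Percentage change from the prior month to the current month."""
    return (current - prior) * 100 / prior


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only decision history; each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, decision: str, reasoning: list[str], evidence: list[Evidence]) -> dict:
        body = {
            "recorded_at": datetime.now(timezone.utc).isoformat(),
            "decision": decision,
            "reasoning": list(reasoning),  # what the system concluded and why
            "evidence": [asdict(e) for e in evidence],  # what the system knew
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Usage: record an escalation whose stated reason cites the data points behind it.
prior, current = 10_000, 44_000  # illustrative monthly volumes
change = month_over_month_change(prior, current)  # 340.0
log = AuditLog()
log.append(
    decision="escalate_to_analyst",
    reasoning=[f"Transaction volume increased {change:.0f}% month-over-month"],
    evidence=[
        Evidence("CUST-42/volume/prior-month", "monthly_volume", prior),
        Evidence("CUST-42/volume/current-month", "monthly_volume", current),
    ],
)
print(log.entries[0]["reasoning"][0])
print("chain intact:", log.verify())
```

Hash chaining makes after-the-fact edits detectable rather than impossible, so a real deployment would likely pair it with write-once storage and access controls.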

What this means for adoption

The firms that will benefit from AI in compliance are those that treat regulatory requirements as design constraints. Explainability is an architectural requirement, not a feature to add later.

The firms that will struggle are those that deploy powerful AI first and figure out explainability later. The examination question is coming. Regulators will ask how your AI makes decisions. "We're working on that" is not an answer.

AI-native financial crime is here, and agentic defense is the response. The firms that deploy it successfully will be those that build trust with regulators through transparency, documentation, and evidence-driven reasoning.